The Rise of the Resilient Supply Chain

The Covid-19 pandemic underscored the need for a resilient supply chain. New tools like digital supply chain twins enable stochastic planning, which models uncertainty and provides a more accurate risk estimate. These tools can be key to making your supply chain more resilient.

In a rush? Here are the 5 key takeaways:

- → Supply chain resiliency can be achieved through redundancy (not necessarily effective) and flexibility.

- → Traditional planning tools are deterministic and cannot address the need for stochastic planning, which models uncertainty and is crucial for developing a resilient plan.

- → Axon’s digital supply chain twin uses probabilistic matching to link material flows across the end-to-end supply chain. It can crawl through many transactions to determine the current demonstrated flows and performance of the supply chain.

- → The digital supply chain twin built up through process mining can be used for stochastic planning, which uses Monte Carlo simulation to run thousands of scenarios and arrive at a probability-to-execute (PTE) score.

- → The PTE score measures the probability that the goal can be achieved, and it can be used to improve supply chain resiliency.

Resiliency Unlocked, The Precise Approach

The desire to create a resilient supply chain is not new. A line often attributed to the 19th-century naturalist and biologist Charles Darwin already captures the idea: “It is not the strongest of the species that survive, nor the most intelligent, but the one most responsive to change.”

It is relatively easy to go back well over a decade and find a lot of material on the topic of supply chain resiliency. In 2005, MIT professor Yossi Sheffi wrote an article titled “Building a Resilient Supply Chain,” which appeared in the Sloan Management Review. It is an abstract of his book The Power of Resilience.

Sheffi, whose book is subtitled, “How the best companies manage the unexpected,” breaks supply chain resiliency into two alternatives—redundancy and flexibility.

1. Redundancy:

- The use of high safety stock and spare capacity, which is very expensive and not necessarily that effective.

2. Flexibility:

- Supply and procurement: Having alternative suppliers and flexible supply contracts.

- Conversion: Being able to manufacture the same product on multiple lines and even factories.

- Distribution and customer-facing activities: Customer segmentation and selected segment-based service.

- Control systems: Used primarily to detect change quickly. As Sheffi states, “The two principal functions of control systems are to detect a disruption quickly and to foster speedy corrective actions.”

- Cultural Change: This is closely related to control systems in that flexibility “requires a culture that allows ‘maverick’ information to be heard, understood and acted upon.”

Recent events, particularly the Covid-19 crisis, have brought the topic of resiliency back to the forefront, especially the “control system” option, otherwise known in supply chain circles as visibility (detection) and planning (correction). These are crucial for avoiding the use of redundancy to achieve resilience.

The Need for Probabilistic Planning

Tim Payne at Gartner has been discussing planning for resilient supply chains for some time now. In an article titled, “Mastering Uncertainty: The Rise of Resilient Supply Chain Planning,” Payne states, “SCP has been driven by a deterministic planning paradigm since the invention of MRP.”

While he refers to MRP—the basis of nearly all planning tools—as being deterministic, he doesn’t explicitly mention that none of the existing planning tools can address the need for stochastic planning, which models the uncertainty. Without modeling stochasticity, there is little chance of developing a resilient plan.

Another dirty little secret he does not mention is that all existing tools base the plan on master data parameters that have been loaded from ERP systems. The quality of the master data is very questionable in many cases; so, garbage in, garbage out, compounding the poor quality of the plan.

Let me be clear: range forecasting is not the same as stochastic planning. Undoubtedly, range forecasting is better than a one-number forecast, but it completely misses the uncertainty on the supply side. That omission gives the supply chain organization a false sense of confidence that the range forecast can be achieved.

Because traditional planning tools are deterministic, they cannot give a risk estimate associated with a plan. Payne brings this up indirectly by referring to the need to deploy new tools to achieve resiliency; ergo, the existing tools cannot get us there.

Along Came a Solution

Enter Axon. The first thing that Axon does is crawl through many transactions to determine the current demonstrated flows and performance of the supply chain. This is in sharp contrast to other planning tools, which rely on ERP master data. Axon goes beyond traditional process mining tools such as Celonis, UiPath, Signavio, Minit and others by using probabilistic matching to link material flows across the end-to-end supply chain. This also serves the primary value of process mining, namely Business Process Improvement (BPI), including compliance and conformance.
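To make the idea of probabilistic matching more concrete, here is a minimal sketch of how a goods issue at one site might be linked to the most likely goods receipt downstream. It is not Axon's actual algorithm, which is not described here. The `Movement` record, the `match_probabilities` function, and the lag and quantity parameters are all hypothetical, and the Gaussian-shaped scores are simply one convenient choice.

```python
import math
from dataclasses import dataclass


@dataclass
class Movement:
    """A hypothetical material movement taken from transaction data."""
    doc_id: str
    material: str
    quantity: float  # assumed to be positive
    day: float       # days since an arbitrary start date


def match_probabilities(issue, receipts, typical_lag=3.0, lag_spread=1.5,
                        qty_tolerance=0.1):
    """Return {receipt doc_id: probability} that a receipt continues `issue`.

    All parameters are illustrative assumptions: the typical transit lag in
    days, how much that lag varies, and the relative quantity tolerance.
    """
    weights = {}
    for receipt in receipts:
        lag = receipt.day - issue.day
        if receipt.material != issue.material or lag < 0:
            continue  # wrong material, or received before it was issued
        # Score peaks when the observed lag equals the typical lag.
        lag_score = math.exp(-0.5 * ((lag - typical_lag) / lag_spread) ** 2)
        # Score peaks when issued and received quantities agree.
        rel_diff = abs(receipt.quantity - issue.quantity) / issue.quantity
        qty_score = math.exp(-0.5 * (rel_diff / qty_tolerance) ** 2)
        weights[receipt.doc_id] = lag_score * qty_score
    total = sum(weights.values())
    if total == 0:
        return {}
    # Normalize the scores so that they sum to 1 across the candidates.
    return {doc_id: weight / total for doc_id, weight in weights.items()}


issue = Movement("GI-1", "M100", 500, 10)
receipts = [
    Movement("GR-1", "M100", 500, 13),
    Movement("GR-2", "M100", 480, 16),
    Movement("GR-3", "M200", 500, 13),
]
for doc_id, probability in match_probabilities(issue, receipts).items():
    print(doc_id, round(probability, 3))
```

The point of the sketch is that no shared document number is needed to connect the two transactions: the link is inferred from how plausible the timing and quantities are, which is what allows flows to be stitched together across systems and sites.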

(*) The names of the facilities and the products are blocked out for reasons of confidentiality.

The diagram above clearly shows the flow of materials through two different production lines at a facility before flowing into the distribution process at the bottom of the diagram (*). There is no limit to the number of supply chain variants that Axon can capture.

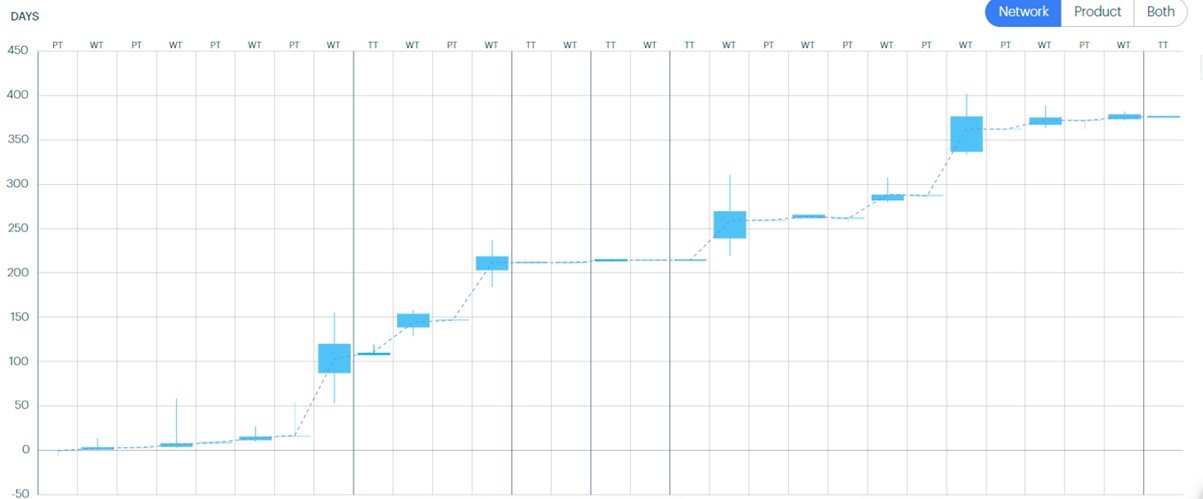

Nearly everyone is interested in performing value stream analysis of their supply chains. The ladder diagram below, common in value stream mapping, shows a multi-stage supply chain in which the process (PT), wait (WT) and transit (TT) times have been analyzed over a given period. The stages are separated by the darker vertical lines. It is a great way of visualizing where process improvements can be achieved. Notice that each of the times is plotted as a box plot to give a sense of variability.

Time is an obvious variable to capture, but other variables, such as cost and quality, can also be captured.

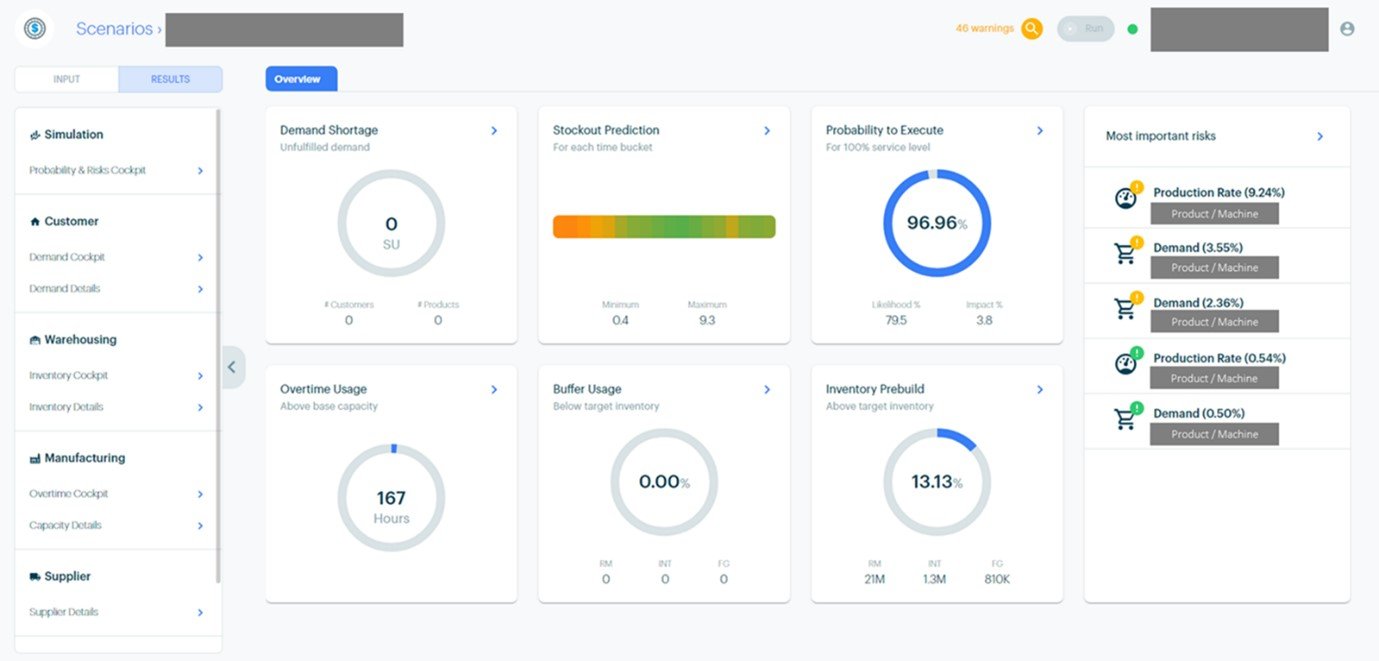
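The box plots in a ladder diagram like this come down to a five-number summary per time component. The sketch below shows one way to compute it with Python's standard library. The `box_stats` helper and the sample durations are invented for illustration and are not taken from the diagram.

```python
import statistics


def box_stats(samples):
    """Five-number summary (the numbers behind a box plot) for durations."""
    q1, median, q3 = statistics.quantiles(samples, n=4, method="inclusive")
    return {"min": min(samples), "q1": q1, "median": median,
            "q3": q3, "max": max(samples)}


# Hypothetical observed durations in days for one stage of the supply chain.
stage_times = {
    "PT": [2.0, 2.5, 2.2, 2.4, 2.1],  # process time
    "WT": [1.0, 6.0, 3.5, 9.0, 2.0],  # wait time
    "TT": [4.0, 4.5, 5.0, 4.2, 4.8],  # transit time
}

summary = {name: box_stats(values) for name, values in stage_times.items()}

# The component with the widest interquartile range varies the most and is
# a natural first candidate for process improvement.
most_variable = max(summary,
                    key=lambda name: summary[name]["q3"] - summary[name]["q1"])

for name, stats in summary.items():
    print(name, stats)
print("Most variable component:", most_variable)
```

In this made-up data the wait time is by far the least predictable component, which is the kind of signal a single average would hide.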

BPI, while important, does not get my juices flowing. The part that does excite me? The digital supply chain twin built up through the first stage of process mining can now be used for stochastic planning. As described by Payne, this is the use of Monte Carlo simulation to run thousands of scenarios that sample from both the demand-side and supply-side distributions of key variables to arrive at a probability-to-execute (PTE) score, which measures the probability that the goal can be achieved.

In this case, the goal is a 90% customer service level. The PTE score is 97%, so the expected customer service level is around 87%. However, there is quite a high likelihood (79.5%) that the target customer service level will not be achieved, with an average impact of 3.8%.
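For readers who want to see the mechanics, the Monte Carlo approach described above can be sketched in a few lines of Python. This is a simplified illustration under stated assumptions, not Axon's implementation. The function name `simulate_pte`, the normal demand distribution, the triangular yield distribution, and every parameter value are hypothetical, so the output will not reproduce the figures quoted above. Here the PTE score is taken to be the share of simulated scenarios in which the service-level target is met.

```python
import random


def simulate_pte(n_scenarios=10_000, target=0.90, seed=42,
                 demand_mean=1000.0, demand_sd=150.0,
                 planned_supply=1100.0,
                 yield_low=0.80, yield_mode=0.97, yield_high=1.0):
    """Estimate a probability-to-execute score by Monte Carlo simulation.

    Every distribution and parameter here is an illustrative assumption.
    In practice they would be fitted to observed supply chain data.
    """
    rng = random.Random(seed)  # seeded so that runs are reproducible
    levels = []
    for _ in range(n_scenarios):
        # Demand side: sample uncertain demand, floored at one unit.
        demand = max(1.0, rng.gauss(demand_mean, demand_sd))
        # Supply side: the planned quantity is reduced by an uncertain yield.
        supply = planned_supply * rng.triangular(yield_low, yield_high,
                                                 yield_mode)
        # Service level for this scenario: the share of demand served.
        levels.append(min(supply, demand) / demand)

    hits = sum(level >= target for level in levels)
    shortfalls = [target - level for level in levels if level < target]
    return {
        "pte": hits / n_scenarios,
        "expected_service_level": sum(levels) / n_scenarios,
        "avg_shortfall_when_missed":
            sum(shortfalls) / len(shortfalls) if shortfalls else 0.0,
    }


result = simulate_pte()
for name, value in result.items():
    print(f"{name}: {value:.3f}")
```

Because both demand and supply are sampled, the result is a risk estimate rather than a single deterministic plan: rerunning with a different `planned_supply` shows how much extra supply buys how much extra confidence.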

That was a lot of numbers. Let’s focus on the action now. Are you interested in hearing and seeing more about how resilient supply chain planning could be a game-changer for your company?